Every week a new AI tool arrives with claims that sound identical to the last one. Most do not survive contact with real work. When Manus AI launched and the hype cycle kicked off, I decided to skip the Twitter takes and run a structured test instead. Seven working days. Real client deliverables. Documented results. Here is what I found.

What Is Manus AI, Actually?

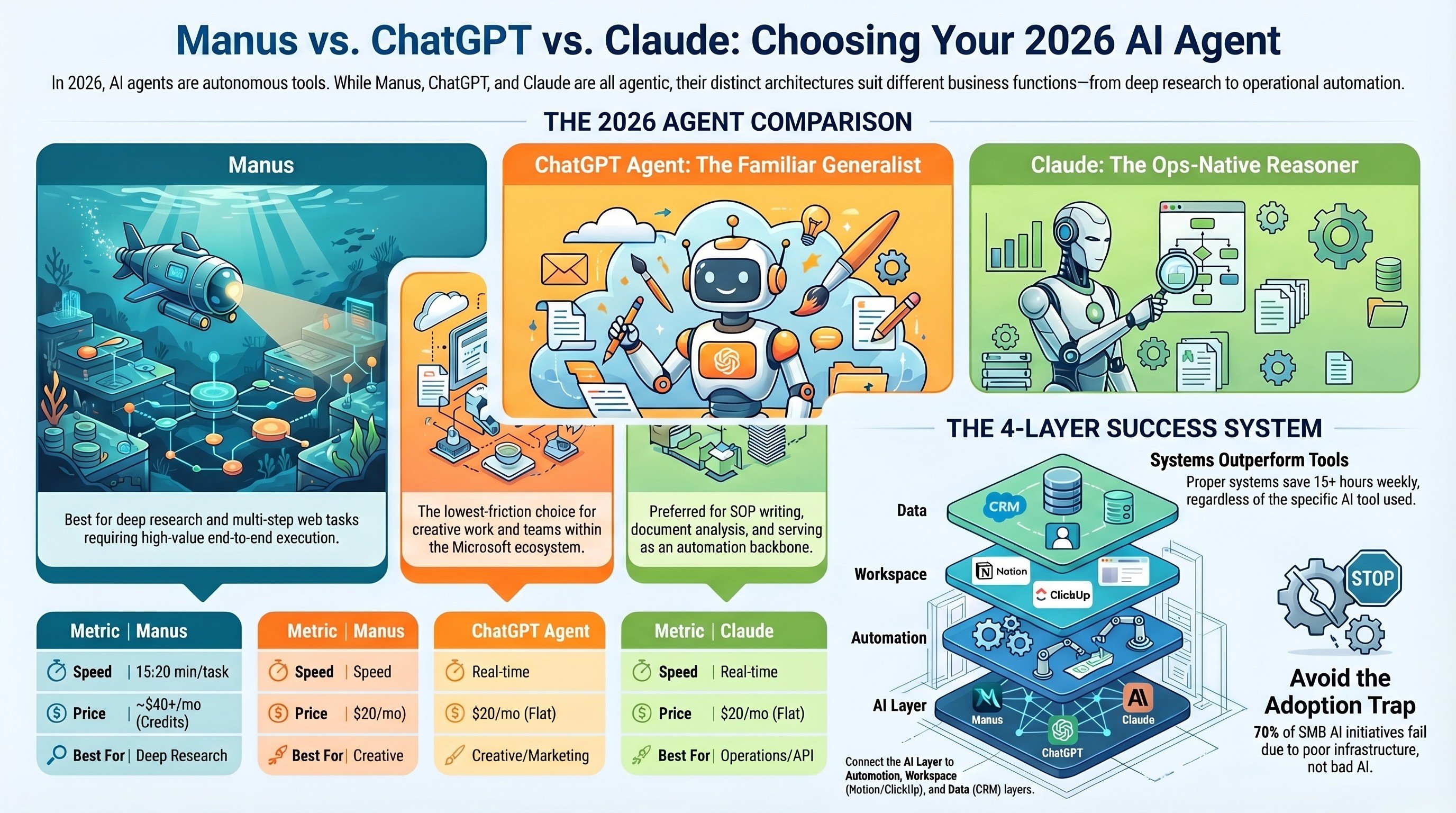

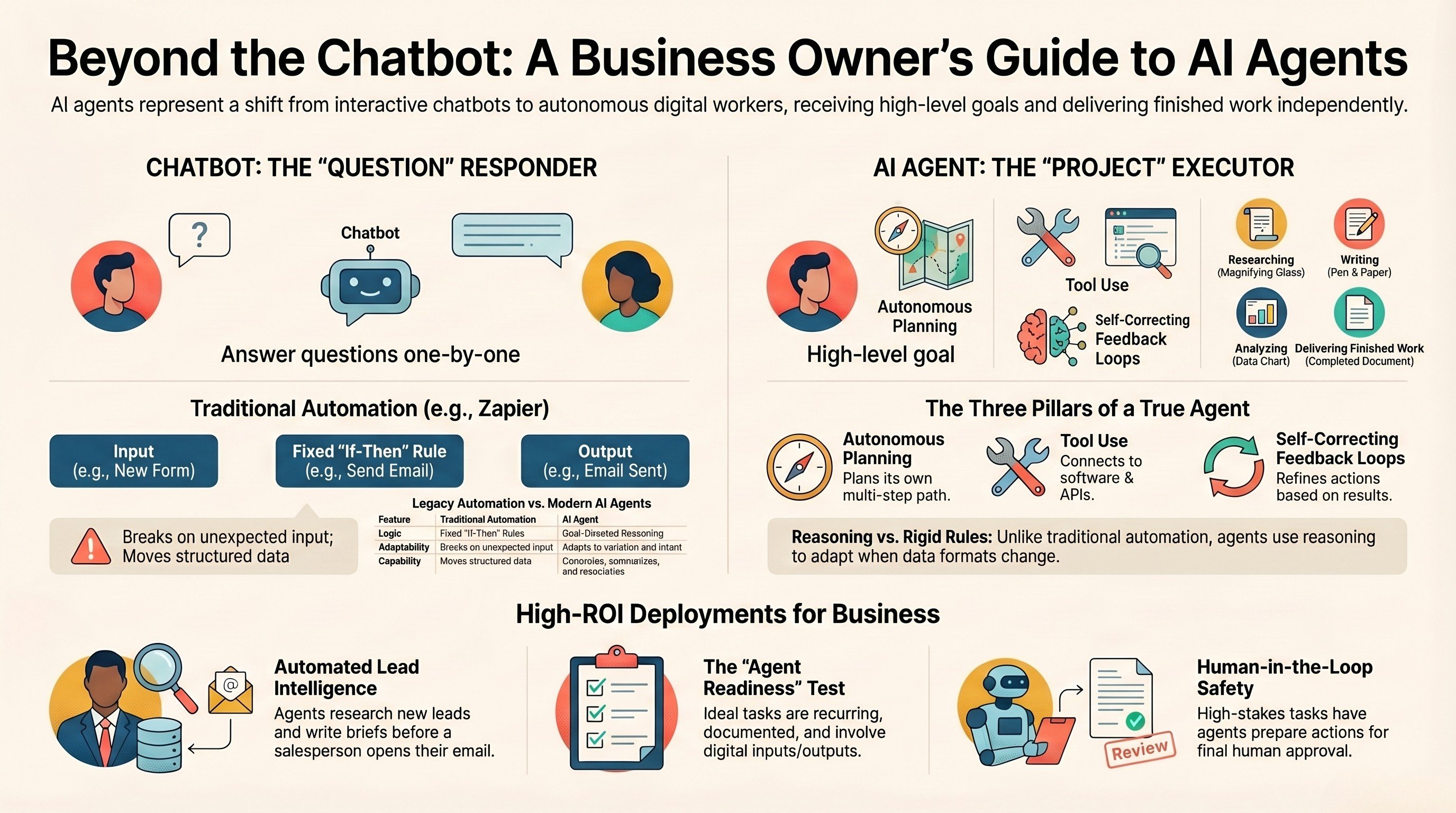

Manus AI is not a chatbot. That distinction matters more than it sounds. You give it a goal, it breaks the goal into steps, executes those steps using tools (browser, code interpreter, file system), checks its own output, and delivers a finished result. The analogy: it is the difference between asking an assistant a question and handing them a project.

IV Consulting context: We test every major AI release against real client work. Manus is the first tool in 18 months that genuinely changed how we approached a task category. That is not because it was perfect, but because it removed an entire layer of manual work on specific task types.

Day 1: Competitor Research Brief

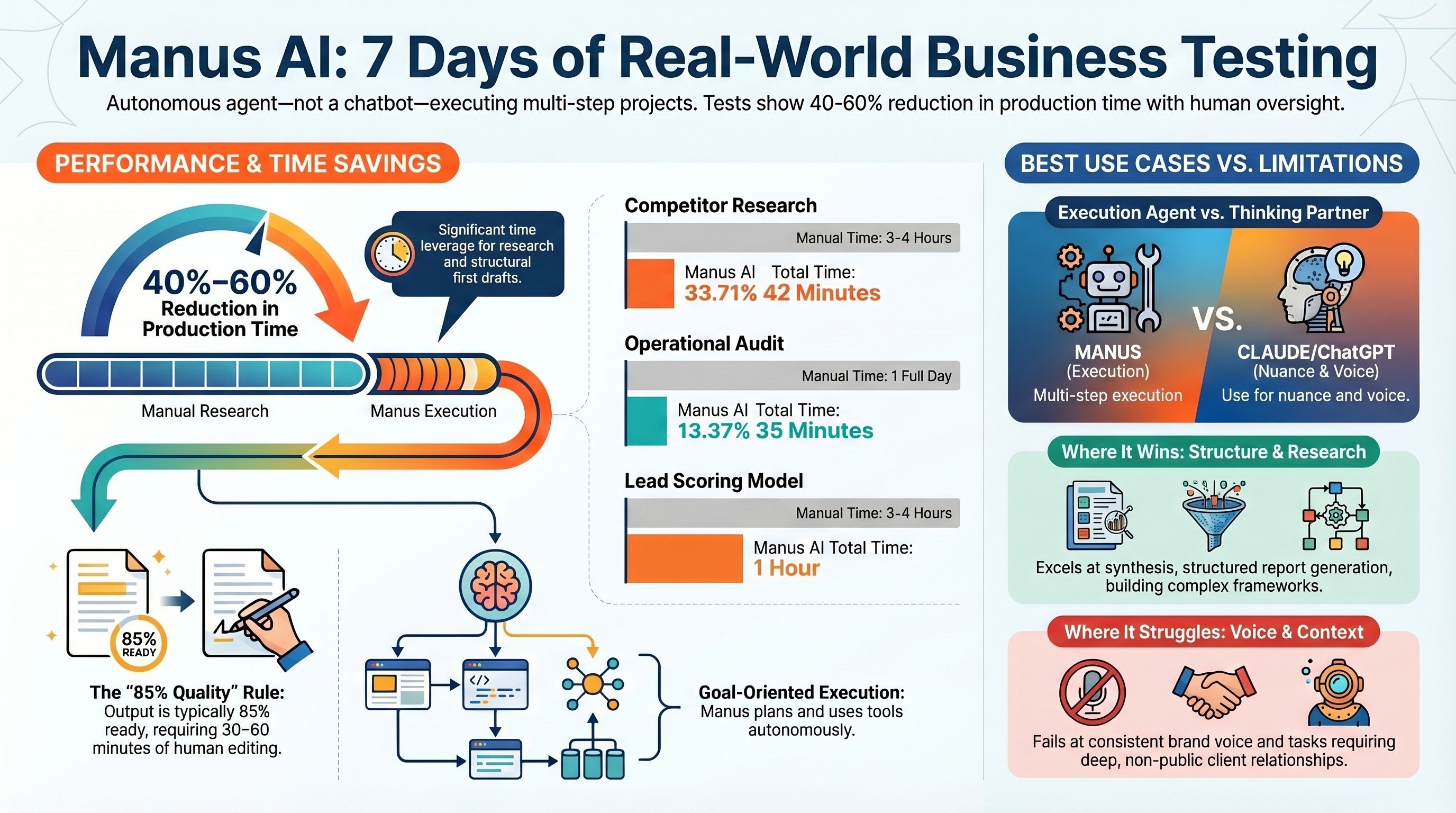

Task: identify the top 5 competitors, summarise their positioning and pricing, and flag gaps a client could exploit. Manus browsed multiple tabs autonomously, scraped competitor sites, pulled pricing pages, read G2 reviews, and cross-referenced LinkedIn positioning. Total time: 22 minutes, with zero prompting after the initial task.

The output was 85% of what I would have produced manually, in about 15% of the time. I then spent 20 minutes editing. Net result: a task that takes me 3-4 hours manually took 42 minutes in total (22 minutes of Manus run time plus 20 minutes of editing).

Verdict: Strong. Budget 30-45 minutes of review time on top of the Manus run. Do not publish unedited.
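To make the Day 1 arithmetic explicit, here is a minimal sketch that counts both the Manus run and the editing time against the manual baseline. The function name `time_saving` is my own, and the 180 and 240 minute baselines are simply the 3-4 hour range from the text converted to minutes.

```python
def time_saving(manual_minutes: float, run_minutes: float, edit_minutes: float) -> float:
    """Fraction of the manual time saved once run time and editing are both counted."""
    total_minutes = run_minutes + edit_minutes
    return 1 - total_minutes / manual_minutes


# Day 1 figures from the text: 22-minute run, 20 minutes of editing,
# against a manual baseline of 3 to 4 hours.
saving_vs_3h = time_saving(manual_minutes=180, run_minutes=22, edit_minutes=20)
saving_vs_4h = time_saving(manual_minutes=240, run_minutes=22, edit_minutes=20)

print(f"Saving against a 3-hour baseline: {saving_vs_3h:.1%}")
print(f"Saving against a 4-hour baseline: {saving_vs_4h:.1%}")
```

The point of counting editing time is that the headline run time alone overstates the saving; the honest comparison is total minutes against the manual baseline.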

Day 2: Outbound Email Sequence

Task: write a 5-email cold outreach sequence targeting operations directors at logistics companies. Three of the five emails were genuinely punchy and non-generic. Two needed a full rewrite. Still a 60% time saving versus writing the sequence manually.

Verdict: Good starting point, not a finished product. Plan 40-60 minutes of editing on a 5-email sequence.

Day 3: Operational Audit Report

I gave Manus a messy internal brief — team size, tool stack, rough pain points. Task: produce a structured operational audit with prioritised automation opportunities. Manus produced a 12-section report with workflow maps, automation recommendations ranked by ROI, and a phased rollout timeline. It correctly identified that the client's biggest bottleneck was between their CRM and invoicing tool — something I had noticed but had not explicitly flagged. Total edit time: 35 minutes. This is a task that takes me a full day manually.

Verdict: Outstanding. Structured report generation from messy inputs is where autonomous AI agents genuinely change the economics of knowledge work.

Day 4: LinkedIn Content Calendar

Task: build a 30-day LinkedIn content calendar with post themes, hooks, and format recommendations. Structure: perfect. Quality: uneven. About 70% of hooks were genuinely strong. The remaining 30% were LinkedIn cliches that needed replacing.

Verdict: Solid for planning, inconsistent for execution. Best used to build the scaffold rapidly, then refine individual pieces before publishing.

Day 5: Lead Scoring Framework

Task: design a lead scoring model with weighted criteria, tier thresholds, and a CSV template for the sales team. Manus produced an 18-criteria scoring matrix across four categories, weighted by category, with tier thresholds and a one-page implementation guide. I adjusted three criteria weights and changed the tier labels — the core framework required no structural changes. A task I would normally charge 3-4 hours of consulting time for was done in under an hour total.

Verdict: Excellent. Structured frameworks, models, and templates are a strong use case. Near-production-quality on first run.
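For readers who have not built one, a category-weighted lead scoring model can be sketched roughly as follows. This is an illustration of the general shape only: the four category names, the weights, the tier thresholds, and the CSV columns are all hypothetical placeholders, not the 18-criteria matrix Manus produced.

```python
import csv
import io

# Hypothetical category weights (they should sum to 1.0); not the Manus output.
CATEGORY_WEIGHTS = {
    "firmographic_fit": 0.35,
    "engagement": 0.30,
    "buying_intent": 0.25,
    "relationship": 0.10,
}

# Hypothetical tier thresholds on the 0-100 weighted score, highest first.
TIER_THRESHOLDS = [(75, "A"), (50, "B"), (25, "C")]


def weighted_score(category_scores: dict) -> float:
    """Combine per-category scores (each 0-100) into one 0-100 lead score."""
    return sum(weight * category_scores[name] for name, weight in CATEGORY_WEIGHTS.items())


def tier(score: float) -> str:
    """Return the first tier whose threshold the score meets, otherwise 'D'."""
    for threshold, label in TIER_THRESHOLDS:
        if score >= threshold:
            return label
    return "D"


def csv_template() -> str:
    """Build a header-only CSV the sales team could fill in, one row per lead."""
    buffer = io.StringIO()
    csv.writer(buffer).writerow(["lead_name", *CATEGORY_WEIGHTS, "weighted_score", "tier"])
    return buffer.getvalue()


# Example lead with made-up category scores.
example_lead = {"firmographic_fit": 80, "engagement": 60, "buying_intent": 70, "relationship": 40}
example_score = weighted_score(example_lead)
print(round(example_score, 1), tier(example_score))
print(csv_template())
```

Adjusting weights or tier labels, as I did with the Manus output, only touches the two constants at the top; the scoring logic stays the same.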

Where Manus Wins and Where It Struggles

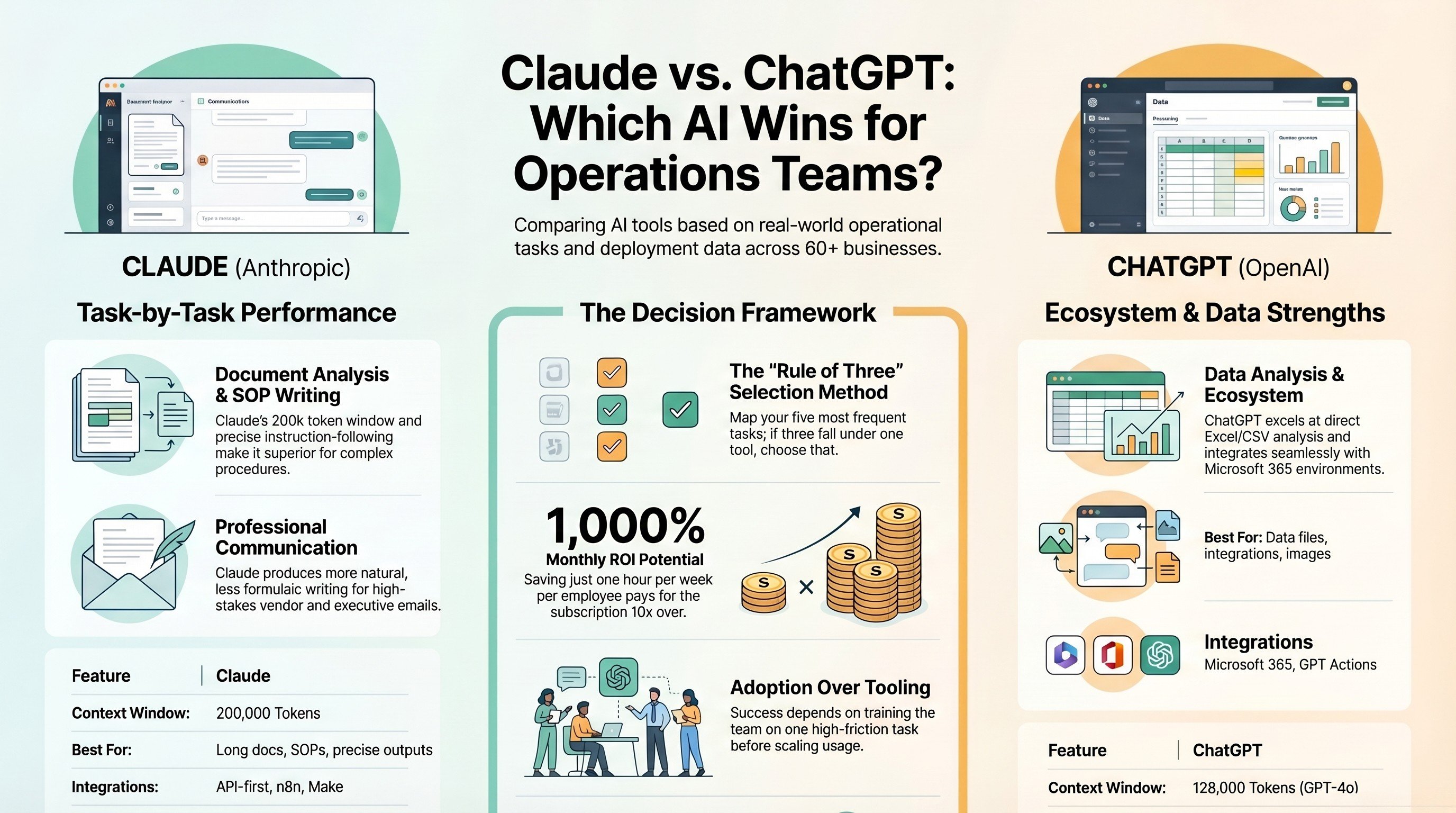

Manus is genuinely strong at: multi-source research synthesis, structured report generation from messy inputs, frameworks and template building, first-draft acceleration on research-heavy work.

Manus struggles with: voice consistency (defaults to slightly formal and generic), high-stakes creative copy, tasks requiring insider knowledge about your client or relationships, speed on simple tasks, accuracy on fast-moving data like recent pricing or news.

The mental model that works: treat Manus like a capable analyst who has been on the job three months. Solid on research and structure. Needs your judgment on strategy and tone. Give them the project, review the output, refine what matters.

Is Manus AI Worth Paying For?

For a consultant billing at $150/hr who spends 8 hours per week on research and report work, a 50% saving is 4 hours per week — $600 per week in recovered billing capacity. At that ratio, Manus pays for itself in the first day of the month. If your work is primarily conversational, creative, or requires tight voice control, the case is weaker.
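If you want to run the same calculation with your own numbers, a rough sketch follows. The function names are my own, and `monthly_cost` in the break-even example is a placeholder value, not a quoted Manus price; substitute the actual cost of whichever plan you are considering.

```python
def weekly_recovered_value(hourly_rate: float, hours_per_week: float, saving_fraction: float) -> float:
    """Billing capacity recovered per week, in the same currency as hourly_rate."""
    return hourly_rate * hours_per_week * saving_fraction


def working_days_to_break_even(monthly_cost: float, weekly_value: float, working_days_per_week: int = 5) -> float:
    """Working days of recovered capacity needed to cover one month's subscription."""
    daily_value = weekly_value / working_days_per_week
    return monthly_cost / daily_value


# Figures from the text: $150/hr, 8 hours per week, 50% saving.
weekly_value = weekly_recovered_value(hourly_rate=150, hours_per_week=8, saving_fraction=0.5)
print(f"Recovered per week: ${weekly_value:.0f}")

# Placeholder subscription cost (an assumption, not a real price).
placeholder_monthly_cost = 120
print(f"Break-even after {working_days_to_break_even(placeholder_monthly_cost, weekly_value):.1f} working days")
```

Note that recovered capacity only turns into revenue if you actually fill those hours with billable work.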

IV Consulting take: We now use Manus as the first pass on research and report deliverables, then bring Claude in for language refinement and strategy thinking. The combination cuts production time for client deliverables by 40-60% without quality loss on the final output.

FAQs

Is Manus AI suitable for small businesses or just enterprises?

Manus is accessible to businesses of any size. Small businesses benefit most from its research automation, competitive analysis, and multi-step data gathering capabilities — tasks that previously required a dedicated research assistant or significant manual time.

How does Manus AI handle sensitive business data?

Manus processes tasks in isolated environments and does not persistently store your data between sessions by default. Avoid inputting PII, financial credentials, or proprietary information unless you have reviewed the platform's data handling terms carefully.

What tasks is Manus AI NOT good at?

Manus struggles with tasks requiring real-time data (live stock prices, breaking news), deeply nuanced creative writing, and highly specialized technical reasoning. It also requires clear, well-structured task descriptions — vague prompts lead to unfocused outputs. Think of it as a capable research assistant: give it a well-defined brief and it excels.

How does Manus compare to hiring a virtual assistant?

Manus handles research, data gathering, report drafting, and multi-step coordination faster than a VA and at a lower cost for high-volume repeatable tasks. A human VA still outperforms it for relationship management, judgment calls, creative work, and tasks requiring emotional intelligence. Most teams use both.

What is the best way to get started with Manus AI for business tasks?

Start with one research-heavy task you do weekly — competitive analysis, lead research, or market monitoring. Define the task clearly with specific sources, output format, and scope. Run it five times, refine your prompt, then build it into a recurring workflow.
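One way to keep briefs consistent across those repeated runs is to store the goal, sources, output format, and scope as structured fields and render them into the prompt text. The sketch below is plain text templating under my own assumptions; `TaskBrief` and its fields are hypothetical names and do not correspond to any Manus API.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """A reusable task description: goal plus the three things worth pinning down."""
    goal: str
    sources: list = field(default_factory=list)
    output_format: str = ""
    scope: str = ""

    def to_prompt(self) -> str:
        """Render the brief as plain text, skipping any field left empty."""
        lines = [f"Goal: {self.goal}"]
        if self.sources:
            lines.append("Sources: " + "; ".join(self.sources))
        if self.output_format:
            lines.append(f"Output format: {self.output_format}")
        if self.scope:
            lines.append(f"Scope: {self.scope}")
        return "\n".join(lines)


# Example brief with illustrative values.
brief = TaskBrief(
    goal="Weekly competitive analysis for a logistics software client",
    sources=["competitor pricing pages", "G2 reviews"],
    output_format="One-page summary with a comparison table",
    scope="Top 5 competitors only",
)
print(brief.to_prompt())
```

Keeping the brief in one place makes it easier to change a single field between runs and see which change improved the output.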
