Most Claude vs ChatGPT articles are written by tech journalists running benchmark tests on academic tasks. Clean prompts. Controlled conditions. No business stakes. That is not how operations teams work. Ops teams work with messy SOPs that are half-outdated, long email threads that need synthesising before anyone can act, and vendor contracts that need reviewing before 5pm. We have deployed both tools inside operations teams at 60+ scaling businesses. Here is what we have actually observed.

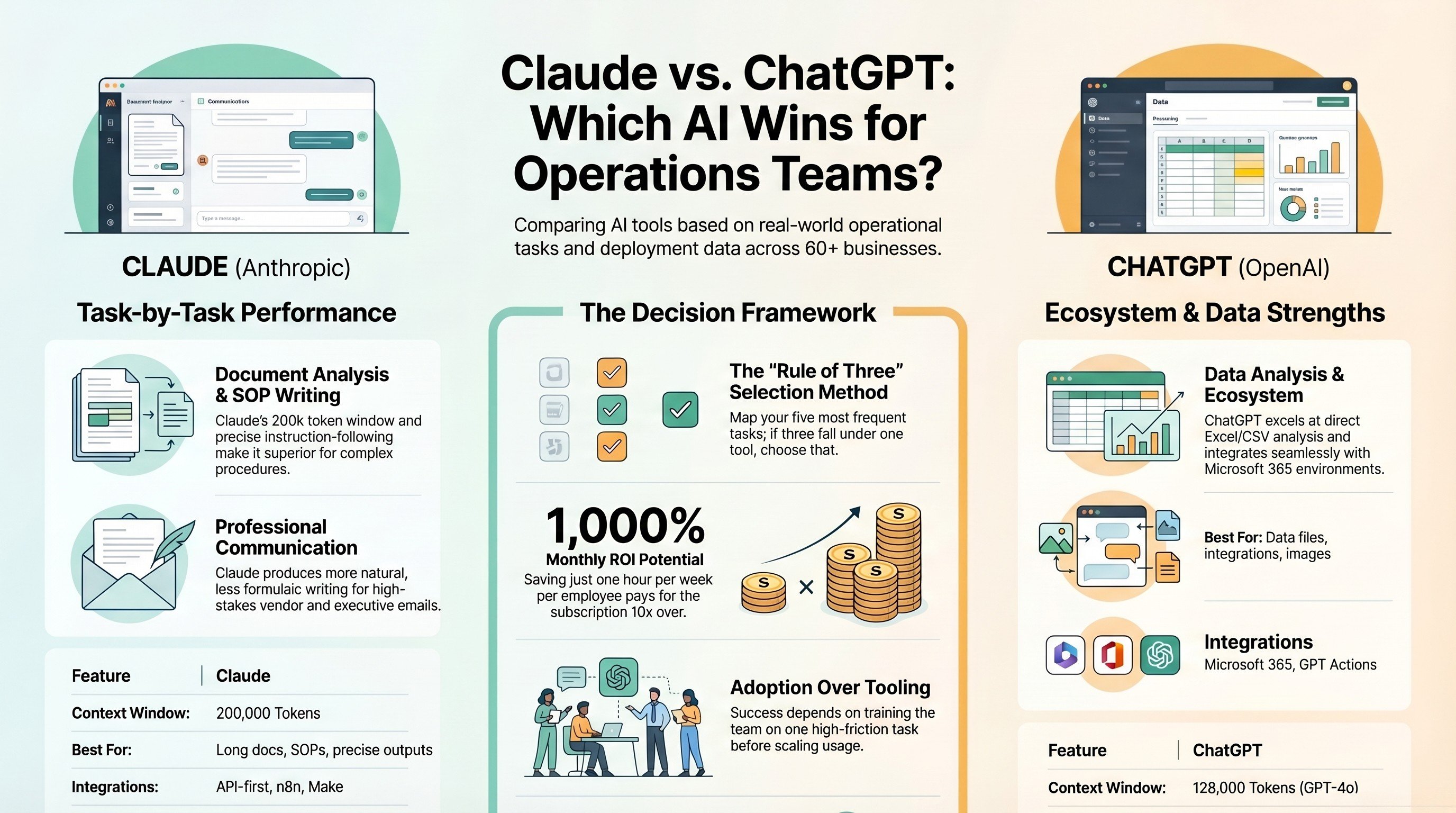

At a Glance: Claude vs ChatGPT for Ops Teams

| Dimension | Claude (Anthropic) | ChatGPT (OpenAI) |
|---|---|---|
| Context window | 200,000 tokens | 128,000 tokens (GPT-4o) |
| Instruction-following | Very high: follows multi-step conditional instructions reliably | Good: occasionally drifts on complex prompts |
| Best for | Document analysis, SOPs, structured outputs, ops writing | Data analysis, Microsoft 365, creative work, plugin ecosystem |
| API for automation | Best-in-class for n8n/Make workflows | Strong via GPT Actions |

Task 1: Reviewing and Summarising Long Documents

In our deployments, Claude has handled this category better than ChatGPT. Its 200,000-token context window means it can usually read an entire long document rather than a truncated version. More importantly, it follows precise extraction instructions: "pull every SLA commitment, flag every liability clause, list every renewal date." The output is structured and accurate. ChatGPT performs competently, but its smaller 128,000-token context window means very long contracts may be truncated. For most documents under 80 pages, both tools work. For extended policy libraries or multi-document synthesis, Claude has a clear edge.

Winner: Claude.
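
To make the context-window point concrete, here is a rough sketch for checking whether a document is likely to fit before you paste it in. It assumes the common rule of thumb of about 4 characters per token for English text, which is an approximation and not either vendor's actual tokenizer. The function names and the reply reserve are illustrative choices, and the window sizes are the ones from the At a Glance table.

```python
# Rough pre-flight check: will this document fit in the context window?
# ASSUMPTION: ~4 characters per token is a heuristic for English text,
# not the real tokenizer of either tool. Treat results as estimates.
CHARS_PER_TOKEN = 4

# Window sizes as quoted in the At a Glance table.
CONTEXT_WINDOWS = {
    "claude": 200_000,
    "chatgpt": 128_000,  # GPT-4o
}


def estimate_tokens(text: str) -> int:
    """Return an approximate token count for the given text."""
    return len(text) // CHARS_PER_TOKEN + 1


def fits(text: str, tool: str, reserve_for_reply: int = 4_000) -> bool:
    """True if the text plus room for a reply is within the tool's window."""
    return estimate_tokens(text) + reserve_for_reply <= CONTEXT_WINDOWS[tool]


# Example: a ~600,000-character policy library (about 150,000 estimated tokens).
policy_library = "a" * 600_000
print(fits(policy_library, "claude"))   # True
print(fits(policy_library, "chatgpt"))  # False
```

If a document is close to the limit, splitting it by section and summarising each part is safer than relying on the estimate.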

Task 2: Writing and Editing SOPs

Both tools write competent SOPs. The difference is in precision. When you give Claude a detailed prompt (use numbered steps, include a decision tree for exceptions, flag steps requiring manager approval), it follows all instructions consistently. ChatGPT follows most instructions, but on complex multi-part prompts one or two elements often drift by the end of the document. For SOP editing, both tools perform well: Claude's edits tend to be more surgical, while ChatGPT rewrites more broadly.

Winner: Claude for writing from scratch. Tie for editing.

Task 3: Email Drafting and Communication

Claude's writing is less generic by default. It does not reach for the same phrases that populate most AI email drafts. When briefed well, it writes the way a senior professional would: direct, specific, appropriate to the relationship. ChatGPT produces solid drafts but has a stronger tendency toward filler phrases and unnecessary softening language. This is not fatal — one editing pass removes it — but it adds friction.

Winner: Claude. Consistently less generic, better tone control, fewer editing passes.

Task 4: Data Analysis and Reporting

ChatGPT has a significant advantage here: the Code Interpreter (Advanced Data Analysis) feature. You can upload a CSV or Excel file and ask it to run analysis, generate charts, and export results — all within the chat. Claude does not have a native equivalent. For teams that need to analyse spreadsheet data directly, this is a meaningful gap. For written commentary on data — turning numbers into a board report or client update — Claude produces more polished output.

Winner: ChatGPT for data analysis with file uploads. Claude for written data narrative.

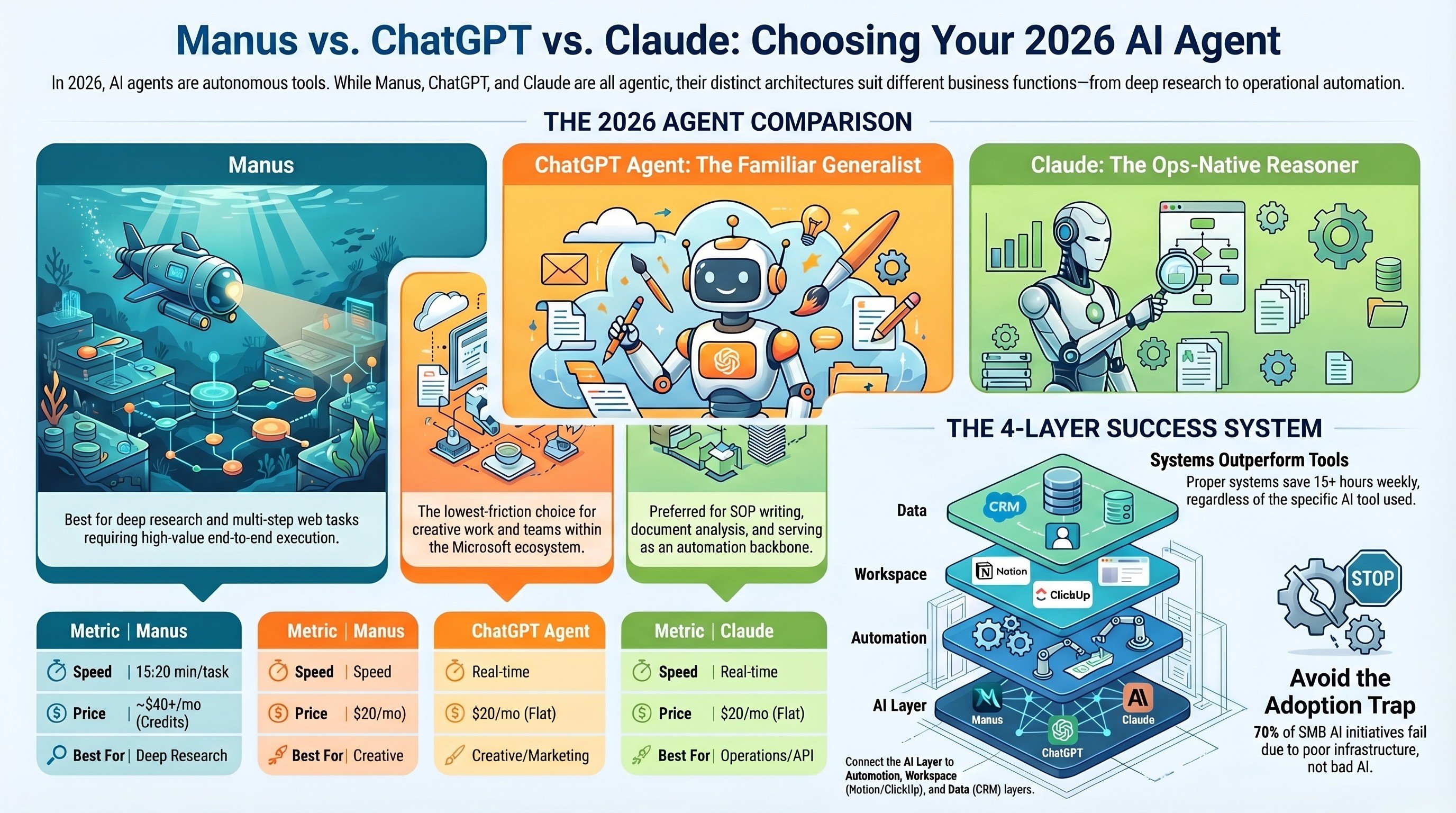

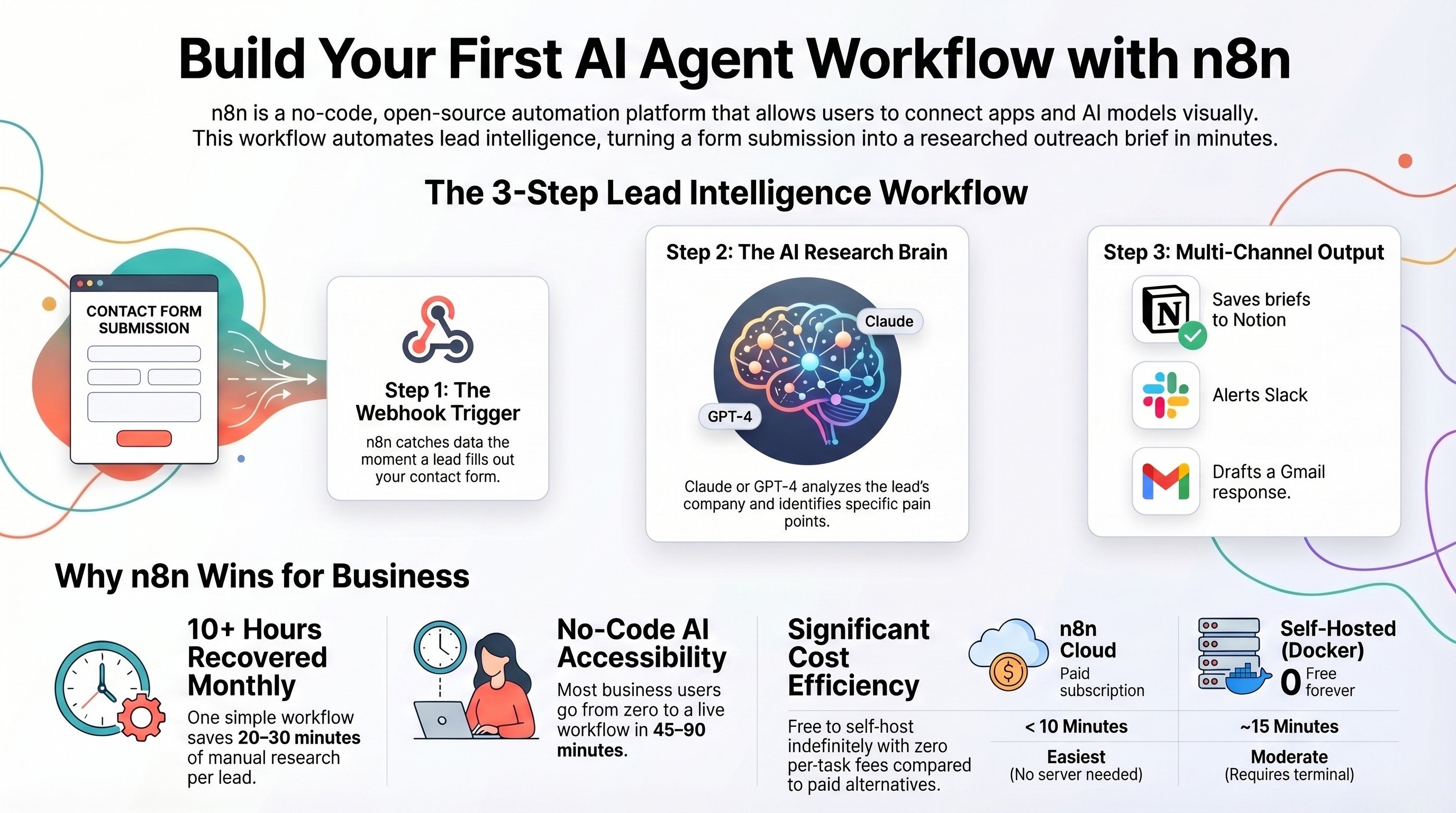

Task 5: Workflow Automation via API

For teams building AI-powered workflows in n8n or Make, Claude's API is the most stable, predictable, and well-documented. It is the brain we put inside most of our clients' automation stacks. ChatGPT is strong via GPT Actions and has the wider native integration ecosystem — especially for Microsoft 365 teams.

Winner: Claude for custom automation workflows. ChatGPT for Microsoft 365 and native integrations.
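
For teams wiring this up themselves, the sketch below shows roughly what a custom automation step sends to Claude's Messages API. It only builds the HTTP request and does not send it. The function name, the system prompt, and the `MODEL` value are illustrative placeholders (substitute a current model ID from Anthropic's documentation). The extraction instruction is borrowed from Task 1.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
# PLACEHOLDER: replace with a current model ID from Anthropic's documentation.
MODEL = "YOUR-CLAUDE-MODEL-ID"


def build_extraction_request(api_key: str, contract_text: str) -> urllib.request.Request:
    """Build (but do not send) a Messages API request for contract extraction."""
    prompt = (
        "Pull every SLA commitment, flag every liability clause, "
        "and list every renewal date.\n\n"
        "<document>\n" + contract_text + "\n</document>"
    )
    payload = {
        "model": MODEL,
        "max_tokens": 1024,  # upper bound on the length of the reply
        "system": "You are an operations analyst. Answer only from the supplied document.",
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )


# To send it (requires a real API key and network access):
#   with urllib.request.urlopen(build_extraction_request(key, text)) as resp:
#       reply = json.loads(resp.read())["content"][0]["text"]
```

In n8n or Make, the same URL, headers, and JSON body can be entered in a generic HTTP request step, so the sketch doubles as a checklist of what that step needs.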

The Decision Framework

List your five most time-consuming recurring ops tasks. Map each to the winning category above. If 3 or more fall in the Claude column, start with Claude. If 3 or more fall in ChatGPT, start there. The Microsoft 365 question usually breaks ties.

Choose Claude if your team: works with long documents regularly, needs reliable instruction-following on complex prompts, produces significant client-facing written communication, is building custom AI workflows via API.

Choose ChatGPT if your team: needs to analyse Excel or CSV files directly in the AI interface, is embedded in Microsoft 365, wants the broadest plugin ecosystem, is starting from scratch and wants the most name recognition for team adoption.
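
The framework above can be sketched as a small tally. The category keys are hypothetical labels for the "Winner" lines in this article. The fallback when neither tool reaches three votes is also an assumption: Microsoft 365 teams go to ChatGPT, everyone else to Claude, in line with the tie-break remark and the overall recommendation.

```python
from collections import Counter

# Winners per task category, taken from the "Winner" lines in this article.
# The keys are illustrative labels, not an official taxonomy.
WINNERS = {
    "long_documents": "Claude",
    "sop_writing": "Claude",
    "sop_editing": "Tie",
    "email_drafting": "Claude",
    "data_analysis_files": "ChatGPT",
    "data_narrative": "Claude",
    "custom_automation": "Claude",
    "microsoft_365_integrations": "ChatGPT",
}


def recommend(top_tasks: list[str], uses_microsoft_365: bool) -> str:
    """Tally the winner for each of the team's top tasks and pick a starting tool."""
    votes = Counter(WINNERS[task] for task in top_tasks)
    if votes["Claude"] >= 3:
        return "Claude"
    if votes["ChatGPT"] >= 3:
        return "ChatGPT"
    # ASSUMPTION: no clear majority, so the Microsoft 365 question breaks the tie.
    return "ChatGPT" if uses_microsoft_365 else "Claude"


team_tasks = [
    "long_documents",
    "sop_writing",
    "email_drafting",
    "data_analysis_files",
    "microsoft_365_integrations",
]
print(recommend(team_tasks, uses_microsoft_365=True))  # Claude
```

Three of the five example tasks fall in the Claude column, so the tally returns Claude even for a Microsoft 365 team. The tie-break only applies when neither tool reaches three.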

The ROI Reality

Both Claude Pro and ChatGPT Plus cost $20/month per user. For a 10-person ops team each saving 1 hour per week at a $50/hr blended rate, that is $500 of recovered time per week, or roughly $2,000 per month (counting four weeks per month), on a $200 monthly investment: about a 10x return. The question is not whether to pay for AI tools. The question is which tool delivers that hour fastest for your specific workload.
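
To rerun that arithmetic with your own numbers, a minimal sketch follows. The function name is illustrative, and the default of four weeks per month is an assumption chosen to match the $2,000 figure. Using 52/12 weeks per month would give a slightly higher value.

```python
def monthly_roi(team_size, hours_saved_per_week, hourly_rate,
                seat_cost_per_month, weeks_per_month=4):
    """Return (monthly value of time saved, monthly seat cost, value/cost multiple)."""
    # ASSUMPTION: weeks_per_month=4 is a simplification, not a calendar average.
    value = team_size * hours_saved_per_week * hourly_rate * weeks_per_month
    cost = team_size * seat_cost_per_month
    return value, cost, value / cost


# The example from the text: 10 people, 1 hour/week each, $50/hr, $20/seat.
value, cost, multiple = monthly_roi(10, 1, 50, 20)
print(value, cost, multiple)  # 2000 200 10.0
```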

IV Consulting take: We recommend Claude as the primary AI tool for most operations teams. The instruction-following precision and document handling quality translate directly to fewer errors and less rework — the highest-value outcome in ops work. We add ChatGPT for teams with heavy data analysis or Microsoft 365 requirements.

FAQs

Should operations teams use Claude or ChatGPT as their primary AI tool?

For most operations tasks, including process documentation, SOP writing, long-document review, and email drafting, Claude edges out ChatGPT due to its longer context window and stronger reasoning on nuanced operational problems. For coding, analysing spreadsheet files directly, native integrations such as Microsoft 365, and tasks requiring the plugin ecosystem, ChatGPT is stronger. Many operations teams use both depending on the task type.

How do I integrate Claude or ChatGPT into our existing operations workflows?

The most practical integration paths are: direct API integration into your PM tool for in-context AI, automation platforms like Make or n8n to trigger AI tasks within workflows, and Claude Projects or Custom GPTs for team-specific knowledge bases. IV Consulting builds these integrations for SMBs — see our AI & Automation services.

Is Claude or ChatGPT better at writing SOPs and process documentation?

Claude consistently produces cleaner, more structured long-form documentation with better adherence to tone and formatting instructions. For SOPs, process maps, and operational guides, Claude is our recommendation. ChatGPT's Custom Instructions feature can narrow this gap if you invest time in prompt engineering.

What data can I safely share with Claude or ChatGPT for operations tasks?

Internal process descriptions, anonymized workflow examples, and general operational challenges are fine to share. Avoid sharing customer PII, financial account details, proprietary product specifications, or anything covered by an NDA without confirming it complies with your company's AI use policy.

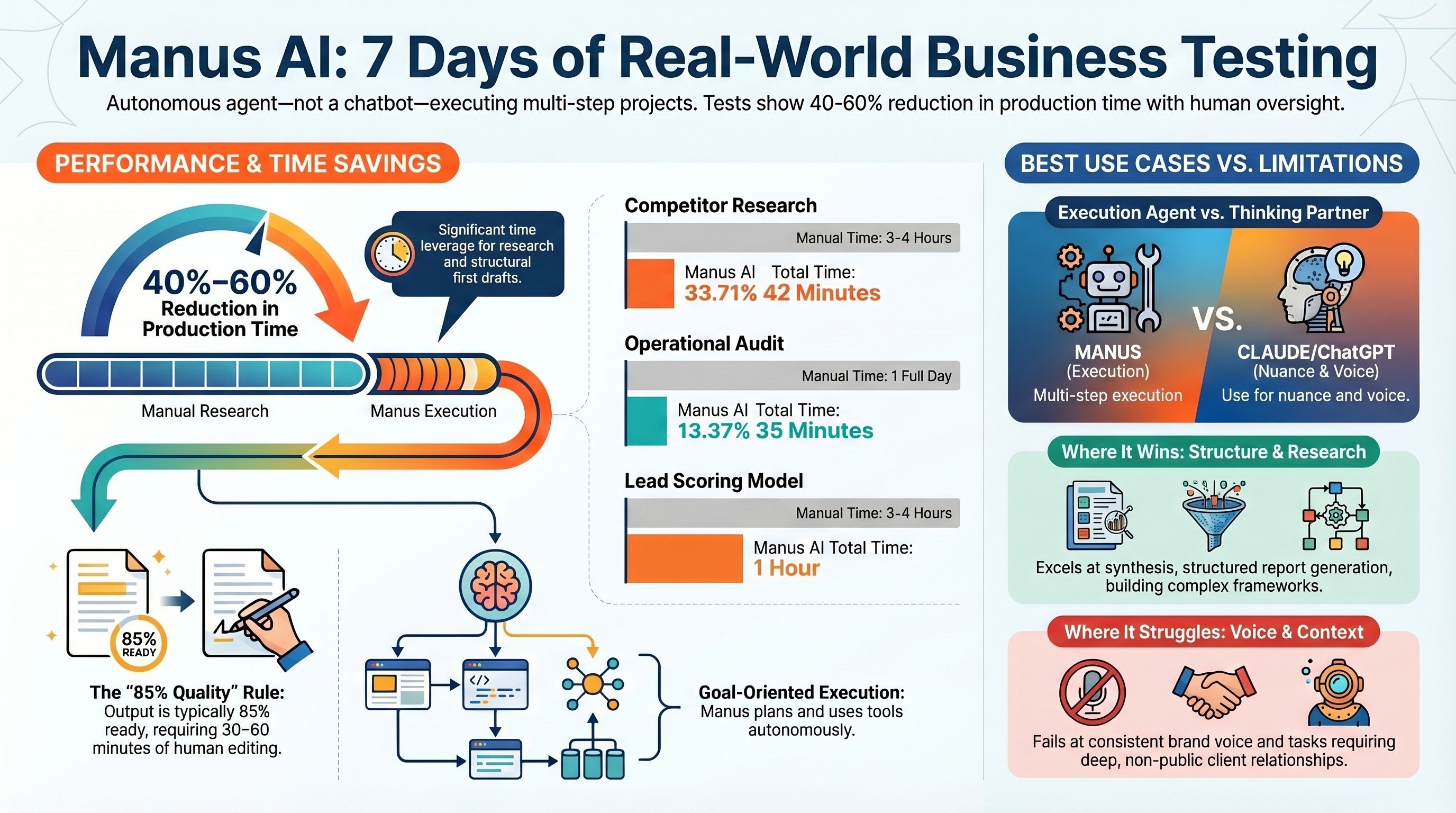

How much time can operations teams realistically save with AI tools?

Based on our client work, operations teams using AI assistants consistently save 5-10 hours per week per person on documentation, reporting, email drafting, and analysis tasks. The key is structured implementation: ad-hoc AI usage saves 1-2 hours per week, while integrated workflows with proper prompts save significantly more.

Not sure which AI fits your ops team?

Book a free 30-minute strategy call. We'll identify your highest-ROI opportunities and give you a build roadmap on the spot.
